Info:

©Jim Brown

Weblog (or Blog)

Monday, May 30, 2022

Blog No Longer Updated

I'm leaving this blog posted for reference to the information it has. Some of it, especially that related to using computers, the Windows operating system, and plain text, is particularly good. Feel free to email me with any comments or questions.

posted at: 11:16 | path: /general | permanent link

Sunday, May 29, 2022

It's Been Fun

I've had a site on the web since late 1997. Much of the time that site included a blog or blog-like page, mostly about Clemson football or using a Windows computer. I've occasionally posted opinions on other topics, usually politics, but by and large, I did not do it consistently enough to develop any sort of a following.

I used to spend a lot of time developing my website, playing with different layouts and graphics. I learned how to use SSH to maintain it, which was a challenge for someone who has used Windows all his work and personal life and is self-taught on computers.

But I don't post much anymore, and I question whether it's worth the $50 or so I spend every year to maintain the domain name and website. There are plenty of free alternatives for email. There is even free web hosting if I feel the need for a presence on the web, for example, https://jcb37310.github.io.

So, for now, this is goodbye. It will be a while before jcby.com goes away—I am paid up through 2023, but this will be my last post here...until it isn't.

posted at: 12:01 | path: /general | permanent link

Thursday, March 31, 2022

Global Economics After Ukraine

An interesting and comprehensive look at what the future of globalization might look like after Putin's invasion of Ukraine. To my limited knowledge, this article at least tries to look back at history and project what the invasion might ultimately mean for the global economy.

posted at: 06:48 | path: /general | permanent link

Wednesday, February 23, 2022

Lenovo ThinkBook 13s

Two years ago I posted about a new computer I had gotten. I was excited about it because it was powered by an 8th-generation Intel i7 and I had never had a Windows machine with that kind of power. I had also used ThinkPads for years and loved their construction and especially their keyboards. I said in a February 2020 post that I would write a review of the new computer. This is the review.

Why wait 2 years to write a review? Because for about 20% of that time, it's been out of service due to various problems. It's finally stable enough to use, although there are some issues, and it is an okay machine--just okay.

Pros

- Fast

- Runs Windows 11

Cons

- Began acting up almost out of the box. Biggest problem was that it would not wake from sleep. Sent to Lenovo Repair Depot. Came back 49 days (!) later with new motherboard.

- Still lots of problems. Lenovo had trouble with its Repair Depot so switched me to Onsite Service.

- Three months, 2 more motherboards, and 2 new trackpads later, computer seems to run okay.

- However, trackpad is too sensitive even on lowest sensitivity setting. Cursor jumps when typing. Hovering finger even a half inch above trackpad initiates a tap.

- Two keys are sticking frequently and one won't engage its base and sticks up above other keys, even though it's still hanging on there (i.e., it has not come completely loose).

So after all the maintenance it's an okay machine but hard to use because of the keyboard and trackpad issues. I would not recommend this machine or any of its successors that share the same body and keyboard (my version has been discontinued after only two years in production).

posted at: 08:10 | path: /general | permanent link

Wednesday, December 22, 2021

What is Wrong with Republicans?

Trump got booed by his supporters when he acknowledged he got the booster vaccine. What is wrong with these people? Why do they think this thing that's good for their health (and everyone's) is a political issue? I used to be a Republican. Now I think they have lost their minds--and any integrity they may have had.

posted at: 10:38 | path: /general | permanent link

Wednesday, September 22, 2021

So Wrong!

The Charleston Post and Courier has an opinion column today saying that SC should spend money to entice people to get the COVID-19 vaccine. That is so wrong. Why should you pay people who make a stupid decision not to get vaccinated? SC should pass a law requiring vaccines.

posted at: 07:33 | path: /general | permanent link

Friday, September 17, 2021

9/11

If you only read one article about the 20th anniversary of September 11, 2001, read this one.

posted at: 17:57 | path: /general | permanent link

Sunday, August 08, 2021

Can You Change Your Mind?

David French, a senior editor of The Dispatch, a columnist at Time, and the author of "Divided We Fall," reviewed Andrew Sullivan's book "Out on a Limb" in today's New York Times. His review makes me want to read the book.

But French said something in his review that struck a chord with me:

"This world is almost impossibly complex. Conventional wisdom is frequently wrong. No partisan side has a monopoly on truth. In these circumstances, a nation needs writers and thinkers who will say hard things, whose fearlessness gives you confidence that you’re hearing their true thoughts."

posted at: 12:26 | path: /general | permanent link

Friday, June 04, 2021

Land of the Free???

Peggy Noonan tries to explain why the world is going crazy.

posted at: 11:27 | path: /general | permanent link

Thursday, January 21, 2021

Who Is Guy P. Harrison?

My post "Why QAnon Can Exist" cites an essay I found riveting and eminently relevant for today's world. It made me wonder who Guy Harrison is and what else he has written. Several excellent essays are linked there. Enjoy.

posted at: 16:25 | path: /general | permanent link

Why QAnon Can Exist

Wow, what an essay here! Read it, study it, act on it!

posted at: 16:12 | path: /general | permanent link

Friday, January 08, 2021

Insurrection and Sedition

It is still hard to believe that the President of the United States, who took an oath to defend the Constitution, incited a mob to attack our Capitol. As usual, Peggy Noonan expresses my thoughts better than I could.

posted at: 08:34 | path: /general | permanent link

Tuesday, November 24, 2020

Remember This Next Year

If the economy turns toward recession next year, remember this opinion article from the NY Times that talks about actions taken by Trump's Treasury in the lame-duck days of Trump's administration. I was once a proud Republican. But I'm ashamed of Republicans' support of Trump.

posted at: 08:42 | path: /general | permanent link

Sunday, November 01, 2020

Reading for the Undecided Voter

Two articles that every American ought to read. Maria Popova on Theodore Roosevelt's view of a good citizen and Peggy Noonan on why she will cast her vote for Edmund Burke.

posted at: 05:33 | path: /general | permanent link

Monday, August 24, 2020

Election Coming

Another excellent article that Trump supporters ought to read. Which, of course, they will not do.

posted at: 07:38 | path: /general | permanent link

Monday, May 18, 2020

Reading Before November

If you plan to vote in the 2020 presidential election (and I hope you do), you should read this article from Vox. It is about Trump and it is not positive. I tell you that so that you won't waste your time if you have decided to vote for Trump and nothing will change your mind. But maybe, just maybe, you are the very one who ought to read it.

posted at: 14:20 | path: /general | permanent link

Monday, May 11, 2020

COVID-19 versus Flu

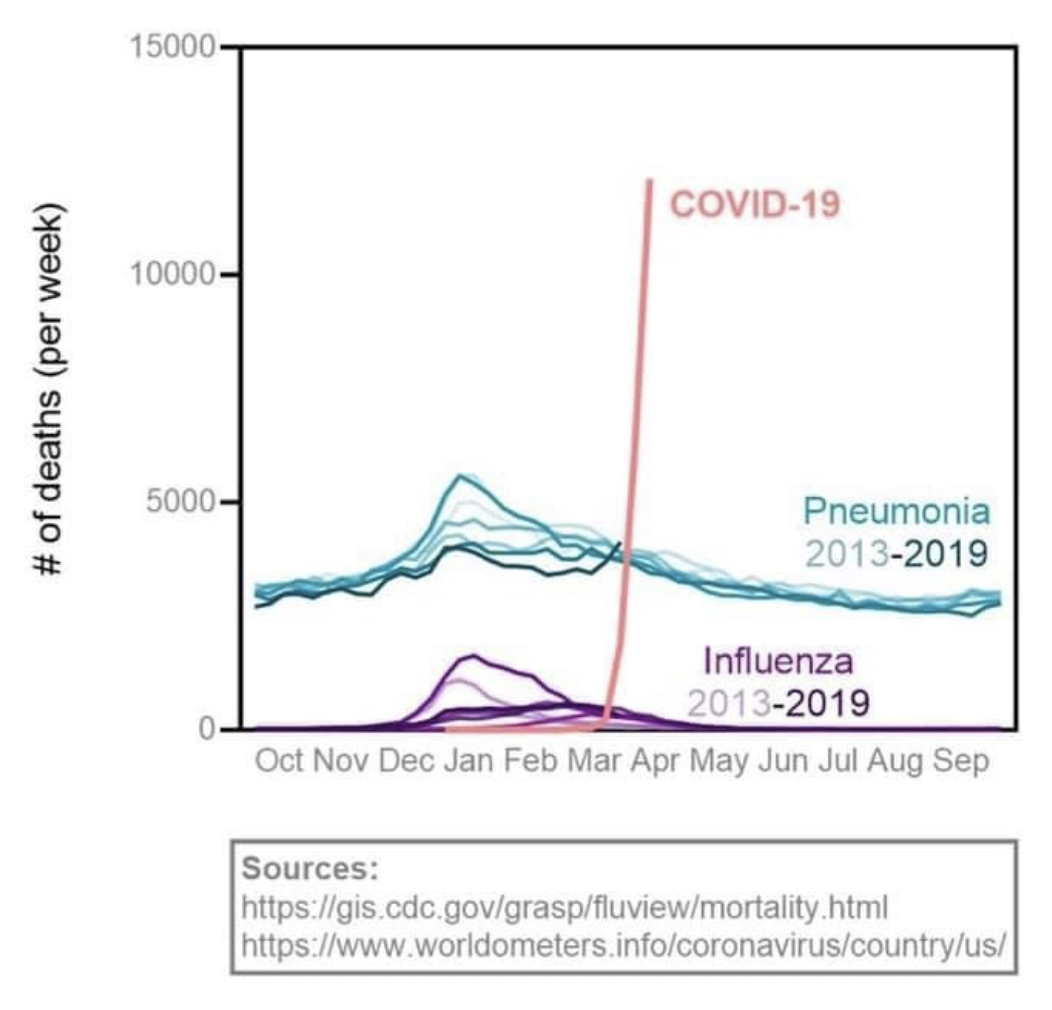

You see a lot of nonsense on the web about COVID-19 and the seasonal flu. If you can read a graph, the image below ought to scare the crap out of you.

The image comes from one of Erin Bromage's blog posts. He is a trained and experienced infectious disease professor, so he is worth reading. In fact, his post on how SARS-CoV-2 (a/k/a the coronavirus) can spread in a community has gone viral because he is knowledgeable and writes clearly and thoughtfully.

posted at: 09:44 | path: /general | permanent link

Friday, May 08, 2020

Fake News?

At one time in America, when you listened to the evening news or read the daily newspaper, you could count on a clear distinction between news and editorials. You could count on almost all reporters to stick to facts or clearly state when they were quoting someone's opinion. No more.

To while away the time in grocery lines, I usually scan the covers of publications racked there. Years ago, I marveled that any sane person would buy the tabloids such as the National Enquirer as the stories were obviously not true. I now realize the tabloids have won. Mainstream news outlets resemble tabloids more than they do newspapers of 30 years ago. All media today compete for time (and clicks) with the uncontrolled opinion-spewing of social media. As a result, headlines--and even content--get more and more misleading.

How do you find news that you can rely on to give you the real story in this day and time? Two ways. One, keep up with the names of reporters you read, find ones that you believe give you the real story and read those more than others. Two, read news from multiple outlets. For example, if you're a conservative who usually listens to Fox News, tune to CNN to get the liberal view. And vice versa.

For the first way, if you are a conservative, as I am, I recommend Peggy Noonan. Although her columns are editorial, she underpins her opinions with fact. I also happen to agree with her opinions (usually).

For the second, a good web site that makes it easy to compare the news as reported from different viewpoints is Allsides. They attempt to characterize outlets as left, center, right and present the same story as reported by different outlets. It's not an exact science, but it makes it easier to compare viewpoints.

posted at: 09:17 | path: /general | permanent link

Saturday, April 11, 2020

Testing for COVID-19

What part of "people without symptoms can spread the disease" does Trump not understand when he says we do not need more testing?

posted at: 12:57 | path: /general | permanent link

Thursday, April 09, 2020

Kiplinger Article

Kiplinger's Personal Finance web site has an April 6 article entitled "Filing My Taxes Early Cost Me My Stimulus Check." It's a technically accurate headline, but I hate headlines that are clearly designed as "click bait," worded to play on current fears to entice someone to check out the article. This one plays on the current concern about ensuring that you receive your fair share of the individual checks the U.S. government will be sending out ("the stimulus check").

Why do I feel as if this headline is "click bait"? Because it tells only one side of the story. Filing your taxes early could result in your getting a stimulus check that you would not have gotten otherwise. If your 2018 income was more than your 2019 income and you file early, your eligibility for a check will be based on your lower 2019 income. The author of the Kiplinger article just happened to have a higher 2019 income. His article does explain all the possible outcomes of when you file relative to the level of income in 2018 and 2019. But a fairer headline would have been "When You File Your 2019 Taxes May Affect Your CARES Stimulus Check."
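
The point about filing time can be put into a small Python sketch. The dollar figures are not from the Kiplinger article or this post; they are assumptions based on the widely reported CARES Act rules for a single filer with no children ($1,200, reduced by 5 percent of adjusted gross income above $75,000). The function names are made up for illustration.

```python
def stimulus_check(agi, base=1200.0, threshold=75_000.0, phaseout_rate=0.05):
    """Estimate the check for a single filer with no children.

    base, threshold, and phaseout_rate are assumed values, not taken
    from the post. The check shrinks as income rises above the threshold.
    """
    reduction = max(0.0, agi - threshold) * phaseout_rate
    return max(0.0, base - reduction)


def check_by_filing_time(agi_2018, agi_2019, filed_2019_early):
    """Use 2019 income if the 2019 return is already filed, otherwise 2018."""
    agi_used = agi_2019 if filed_2019_early else agi_2018
    return stimulus_check(agi_used)


if __name__ == "__main__":
    # Income went up in 2019: filing early hurts.
    print(check_by_filing_time(70_000, 110_000, filed_2019_early=True))
    print(check_by_filing_time(70_000, 110_000, filed_2019_early=False))
    # Income went down in 2019: filing early helps.
    print(check_by_filing_time(110_000, 70_000, filed_2019_early=True))
    print(check_by_filing_time(110_000, 70_000, filed_2019_early=False))
```

The same function gives opposite answers depending on which year had the higher income, which is why the headline tells only half the story.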

posted at: 06:54 | path: /general | permanent link

Monday, February 03, 2020

New Computer

Excitement personified. I'm getting a new computer tomorrow, a Lenovo ThinkBook 13s. I've used ThinkPads forever. As I understand it, the ThinkBook is like a poor man's ThinkPad. I'll write about it after I get it and use it for a while.

My primary computer is my MacBook Pro. But I do some work that requires a Windows machine. When my Lenovo Twist died in 2018, I bought a "cheap" HP, thinking that I did not need much in the way of performance for the little work I did in Windows. Wrong! I've gotten too used to the speed of good laptops, so the HP seemed to crawl at times. It is a good computer, but with a Pentium processor and spinning-disk hard drive, it was just too slow. So when Lenovo put the 2019 ThinkBook on clearance sale, it was just too good a deal to pass up.

The HP will become the file and print server on my home network. The computer that served that purpose, a 12-year-old Dell Inspiron 530, will be retired.

posted at: 17:54 | path: /general | permanent link

Tuesday, May 21, 2019

New Search

I've added a new search box to each page on the site. I had been using Google's Custom Search. Of course the price for using Google is that it reserves the right to put ads in the search results. Also, Google only re-indexes the site on its schedule and new content may not have been indexed when you are looking for it. Now I'm using a Perl script hosted on my own site, so search is fast, always up to date, and the results have no ads.
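
For the curious, here is a minimal sketch of why a self-hosted search stays current: it reads the files at query time instead of relying on someone else's index. This is not the Perl script the site uses. It is a Python illustration that assumes, hypothetically, that posts are stored as `.txt` files under one folder; the function name `search_posts` is made up.

```python
from pathlib import Path


def search_posts(root, query):
    """Return paths of .txt files under root that contain every query word.

    Matching is case-insensitive. The folder layout and file extension
    are assumptions for illustration only.
    """
    words = query.lower().split()
    matches = []
    for path in sorted(Path(root).rglob("*.txt")):
        text = path.read_text(encoding="utf-8", errors="ignore").lower()
        if words and all(word in text for word in words):
            matches.append(path)
    return matches


if __name__ == "__main__":
    # "posts" is a hypothetical folder name.
    for hit in search_posts("posts", "windows plain text"):
        print(hit)
```

Scanning every file on each query is simple and fine for a small site. A larger site would want a prebuilt index.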

posted at: 17:48 | path: /general | permanent link

Saturday, April 27, 2019

Still Spending

Back in July of 2011, I blogged about government spending versus tax receipts. The article that I linked to in that post no longer exists, so I went looking for an update of the key graph. You can review the updated graph of tax receipts and spending as a percent of gross domestic product. Note that since the recession in 2008, our government has consistently spent more than it receives. Is this any way to run a "business"?

posted at: 19:20 | path: /general | permanent link

Tuesday, January 23, 2018

Who Were the Original Programmers?

With all our devices today--computers, tablets, smartphones--we depend on computer programmers to write the code that allows us to do incredible, complex things. The world now seems to run on computer code. What do you see in your mind's eye when you think about a computer programmer? I'm guessing most of us visualize a young man, mathematically inclined, who might tend to be a loner, spending days and nights on end pounding a keyboard to turn out some new app or game. That is probably a reasonable picture of today's primary computer programmer. But it may surprise you to learn that the original computer programmers were all women.

At the beginning of World War II, the demand for artillery firing tables was increasing exponentially. Firing tables tell the artillery gunner in the field how to aim his gun to hit a distant target. For every angle of elevation of the gun barrel, every wind speed, every range to the target, there were numerous trajectories that an artillery shell could take.

Each of these trajectories required thousands of calculations to produce a firing table for a particular type of gun. The U.S. Army decided to hire women to make these calculations (which were viewed as "clerical" work and more suitable to women than men) and embarked on a nationwide recruitment program for college-educated, mathematically-talented women. They came to be called "computers" and hundreds of them manned hand adding machines in large rooms to produce the firing tables.

The problem was that it took too long to solve the differential equations to calculate the trajectories using adding machines. So the Army funded a project at the University of Pennsylvania to build an electronic calculator to produce the firing tables faster. The calculator, the first general-purpose electronic computer, was called ENIAC (Electronic Numerical Integrator and Computer). It was designed and built by John Mauchly and Pres Eckert and the team of engineers and technicians at Penn.
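
To give a feel for the arithmetic involved, here is a rough modern sketch in Python of computing one line of a firing table by stepping the equations of motion forward in small time increments. It is not the method the "computers" or ENIAC actually used. The muzzle speed, the simple drag model, and the drag constant are all made-up assumptions for illustration.

```python
import math

G = 9.81  # gravitational acceleration, m/s^2


def shell_range(elevation_deg, muzzle_speed=500.0, drag_k=0.0, dt=0.01):
    """Step a shell's flight forward in time and return where it lands (meters).

    muzzle_speed, drag_k, and dt are illustrative assumptions. Drag is
    modeled as proportional to speed squared, opposing the motion.
    """
    angle = math.radians(elevation_deg)
    x, y = 0.0, 0.0
    vx = muzzle_speed * math.cos(angle)
    vy = muzzle_speed * math.sin(angle)
    while True:
        speed = math.hypot(vx, vy)
        ax = -drag_k * speed * vx
        ay = -G - drag_k * speed * vy
        new_x = x + vx * dt
        new_y = y + vy * dt
        if new_y < 0.0:
            # The shell crossed the ground during this step: interpolate.
            frac = y / (y - new_y)
            return x + frac * (new_x - x)
        x, y = new_x, new_y
        vx += ax * dt
        vy += ay * dt


if __name__ == "__main__":
    # One small "firing table": range for several barrel elevations.
    for elevation in (15, 30, 45, 60):
        print(elevation, round(shell_range(elevation, drag_k=1e-4)))
```

Each call repeats the same few additions and multiplications thousands of times. A real table also had to account for wind, air density, and shell type, which multiplies the work many times over.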

After ENIAC was built, it still had to be "programmed," set up to solve the equations for the trajectories of an artillery shell. There were no instruction books on how to program it. More difficult, programming was accomplished by setting switches and plugging wires so that the machine processed the math in the correct sequence. The programming was turned over to six of the "computers" who had been calculating the firing tables by hand: Kay McNulty, Betty Jennings, Betty Snyder, Marlyn Meltzer, Fran Bilas, and Ruth Lichterman.

The women were given blueprints of ENIAC's wiring and charged with figuring out how to produce firing tables from this monster of a computer (ENIAC weighed 30 tons and occupied a 30x50 foot room). And they did, becoming the first computer programmers and developing many of the basic techniques still used today in programming languages such as COBOL, which drew heavily on the work of another woman, Grace Hopper.

Sadly, after the war, when the amazing accomplishments of ENIAC were publicized, the women who had made it work were not mentioned. They were not even invited to the dinner celebrating the success of ENIAC, even though they stayed long into the night to fix a glitch that threatened to derail a successful demonstration to the Army brass.

These women led the way for other female programmers. Indeed, into the 1960s, most computer programmers were women. Who knows, if the pioneering work of the "ENIAC Girls" had been widely publicized to serve as role models and inspiration, that may still be the case today.

posted at: 09:25 | path: /general | permanent link

Thursday, September 14, 2017

Wikipedia

I was born in the 1940s, long before the Internet came into being to supply information. For someone like me, who loved to read and wanted information about everything, there was a means. Door-to-door salesmen walked the neighborhoods, selling encyclopedias: Britannica, Compton's, World Book, Funk & Wagnalls. I was lucky enough that my parents wanted me to have access to information and bought a set of Compton's, which I assume was the cheapest since my parents were cotton-mill workers. Oh, the wonderful hours I spent poring over every volume in that set. I still like to learn about new things. Today I use the World Wide Web over the Internet. Specifically, I use the web site Wikipedia.

Wikipedia is an online encyclopedia. You can find it at http://wikipedia.org. There are two amazing things about Wikipedia. First, it is free. Second, it is written by us, the public. Anyone can contribute an article on any subject or edit information that is already published on the web site.

You would think that this would lead to volumes of inaccurate or intentionally misleading information, but it doesn't, at least not for mainstream topics such as medicine, history, arts, computers, technology, and others. Here's why: Wikipedia, like other wiki sites on the web (there are many), is based on software named wiki by its author. That software allows one to edit a wiki site web page directly through a web browser (any browser, such as Chrome, Firefox, Safari, or Internet Explorer). For every active wiki site on the Internet, there develops a community of users who contribute and edit the information. They take pride in accuracy and completeness. Many strive for a "neutral point of view," Wikipedia's guiding principle. The community won't let inaccurate and misleading information added by a user remain posted for long. In research to write this article, I came across a challenge to anyone who doubts this. The author suggested that you add intentionally inaccurate information to any mainstream Wikipedia topic and monitor your posting. He predicted that within 10 minutes a Wikipedia community member would correct it.

You may wonder what this wiki software is. Wiki was invented by Ward Cunningham in 1995. The word "wiki" is Hawaiian for quick and Cunningham was looking for a word to call his software that conveyed how quickly web pages could be created and edited with it. He published the first wiki web site, for computer programmers to collaborate, at http://wiki.c2.com. It's still there, although active editing stopped in 2015. Editing directly on the web is exactly the collaboration that Tim Berners-Lee envisioned when he invented the World Wide Web in 1991. Berners-Lee's first simple browser included editing capability. He did not have the time, however, to produce a full-featured browser. When Marc Andreessen wrote the first one, Mosaic (later Netscape), he focused on publishing, not collaboration.

Wikipedia is the largest wiki ever. The English language version has over five million articles, on virtually any topic you could want information about. Over 1,200 volunteer administrators keep watch over Wikipedia. On December 2, 2016, the Wikipedia site showed about 125,000 "active users," defined as users who had made an edit to an article in the past 30 days.

Several studies of the accuracy of Wikipedia's entries have been made. For mainstream topics such as medicine and technology, those studies have found Wikipedia to be as accurate as other encyclopedias. Even schools and universities are beginning to accept Wikipedia as accurate enough to cite. In any event, every Wikipedia page has citations at the bottom so you can double-check the information if you need to. All in all, Wikipedia is a good starting point to learn about any topic.

posted at: 12:02 | path: /general | permanent link

Saturday, May 13, 2017

Apple versus Microsoft--a Little History

In 1981, when Microsoft provided the operating system for the original IBM Personal Computer (PC), it retained the right to sell that operating system to other computer manufacturers. Thus was born the PC clone market. Many manufacturers entered the market and by the mid-1980s, inexpensive PC clones dominated the hardware side of the market. Microsoft dominated the software side. That basic fact -- that Microsoft is a software company and Apple is a hardware company -- accounts for the basic differences between PCs and Macs.

Apple co-founder Steve Jobs said, "Apple strives for the integrated model so that the user isn't forced to be the systems integrator." This meant Apple designed its computers from the ground up to adhere to certain standards and to operate the computer a certain way. As a result, Apple hardware is better built and more attractive than PC hardware, but is generally more expensive.

Because it provided its own software (both the macOS operating system and basic applications), tailored to its hardware, Apple could provide a more stable system. PCs running Windows had to live in the world of device drivers to provide the interface between the Windows operating system and the myriad different hardware configurations that the PC manufacturers produced. As a result, Macs do not crash as frequently as Windows PCs. Also as a result, however, PCs evolve more rapidly because of the competition among all those hardware manufacturers. A prime example is gaming. Windows PCs dominate the gaming market because of quick development and deployment of improved video adapters.

Because Steve Jobs believed he knew what users needed, Apple computers usually give you one way to perform a given action. Windows, on the other hand, gives you multiple ways. A Windows PC is highly customizable, a Mac not so much. Customization is a two-edged sword. As a result of all the options, Windows is more difficult to learn than macOS. However, if the Mac's way does not seem intuitive to you -- although Apple does a good job of making most basic actions intuitive -- you have fewer options to change it.

posted at: 10:45 | path: /general | permanent link

Monday, February 13, 2012

Definition of Capitalism

An amazing story from China.

posted at: 09:41 | path: /general | permanent link

Thursday, December 08, 2011

Can Anyone Create Jobs?

One of the most coherent, logical newspaper articles I've read in some time. Recommended reading.

posted at: 14:41 | path: /general | permanent link

Friday, July 22, 2011

It's Really Pretty Simple

The title is talking about defining the problem, not the solution, to our national debt. The solution is tied up with political maneuvering by the same politicians who have ignored the clearly approaching problem for 30 years. This article summarizes it well. For the past 60 years, the government has averaged receipts of 18 to 19 percent of GDP. Therefore, we cannot average more than that in spending or we add to the already unacceptably large debt. In 2010, we spent 25 percent of GDP. Continue that, and the defaults now being talked about as a possibility next Wednesday become the norm, and the U.S. is on its way to becoming a second-rate nation.

posted at: 11:23 | path: /general | permanent link

Friday, June 03, 2011

Models

I spent almost 30 years in environmental affairs and remediation work for a corporation. Many times I railed at the money that industry had to spend based on a model that some agency or consultant had constructed, often without needed data and with questionable assumptions and inputs. Now, in retirement, I'm worried about this country's future given the fiscal mess we're in. So I was transported back in time when I read this article about models used to assess the impact of cuts in spending or increases in taxes. Deja vu all over again. I have no idea if the author of this article knows what she's talking about. But there are enough questions in there to make you wonder if anyone really understands what we can do and what the consequences will be when we do it. Go read it.

posted at: 14:38 | path: /general | permanent link

Saturday, May 28, 2011

What is Valid?

When I think of all the resources that have been squandered based on "new scientific findings," it makes my stomach ache. Don't get me wrong, science and technology have been the very foundation of this wonderful life that we now enjoy. But when you study something as complex as human health or the environment, you can gather such a small part of the data that influences the systems that it is easy, too easy, to draw the wrong conclusion. Then we're spending resources on the wrong thing. This post from Stephen Bainbridge talks about a recent "correction" of past conclusions. Here is his concluding paragraph:

"The point is not that we should ignore environmental concerns. The point is that we should be wary about claims that massive social and economic changes are necessary simply because the scientific consensus of the moment claims they're desirable. Like the medical claims about salt I mentioned in my earlier post, and like this latest news, the consensus of the moment can turn out to be seriously flawed."

posted at: 07:16 | path: /general | permanent link

Monday, April 11, 2011

The Problem with Social Security

Other than the fact that it was originally passed to be an emergency measure to keep people from starving and has had significant payment and tax increases over the years, the basic problem is life expectancy. In 1935 when Social Security was passed, the average life expectancy at birth of males (who constituted most of the workforce at that time) was 60 years. In other words, the Congressmen who passed it did not expect most workers to live to collect a dime since the qualification age was 65 years. Now life expectancy at birth is about 78 years. So we went from few collecting benefits to almost all of us receiving benefits for 13 years on average and at increased payouts. No wonder it's sopping up more and more of our country's revenue every year! Time for a change!

posted at: 18:17 | path: /general | permanent link

Wednesday, January 26, 2011

Can the U.S. Survive?

Robert saw it long ago:

"The America of my time line is a laboratory example of what can happen to democracies, what has eventually happened to all perfect democracies throughout all histories. A perfect democracy, a 'warm body' democracy in which every adult may vote and all votes count equally, has no internal feedback for self-correction. It depends solely on the wisdom and self-restraint of citizens...which is opposed by the folly and lack of self-restraint of other citizens. What is supposed to happen in a democracy is that each sovereign citizen will always vote in the public interest for the safety and welfare of all. But what does happen is that he votes his own self-interest as he sees it...which for the majority translates as 'Bread and Circuses.'

'Bread and Circuses' is the cancer of democracy, the fatal disease for which there is no cure. Democracy often works beautifully at first. But once a state extends the franchise to every warm body, be he producer or parasite, that day marks the beginning of the end of the state. For when the plebes discover that they can vote themselves bread and circuses without limit and that the productive members of the body politic cannot stop them, they will do so, until the state bleeds to death, or in its weakened condition the state succumbs to an invader -- the barbarians enter Rome."

--Robert A. Heinlein

posted at: 12:44 | path: /general | permanent link

Tuesday, December 14, 2010

A Simple Life

The key to living a simple life is consistency. The key to consistency is self-discipline. But why is it so hard?

posted at: 13:10 | path: /general | permanent link

Friday, November 26, 2010

Making a Presentation

I spent 40 years in a corporate environment. Early on I realized that making an effective presentation to a group was key to my ability to do a good job. By communicating clearly, I could provide co-workers with the information they needed to do a good job, especially on my projects, and managers with recommendations they needed to support their job, and perhaps my advancement. So I worked hard at it.

In the late 1960s, when I began my career, using transparencies with overhead projectors was the way to illustrate your points. At first, you used the transparency interactively -- you wrote on it during the presentation with a wax pencil. This "evolved" to preparing bullet lists before the presentation that would be shown on the screen. Then, in the mid-1980s, PCs came in and the production of bullet lists became "beautified." You made your lists in a word processor and printed the transparencies, all in black and white. As PCs progressed, clip art, color, different fonts, different viewpoints became possible and the transparencies got fancier and fancier. Then the overhead projectors were replaced by computer-driven projectors and computer applications to make the slides took over, allowing you to produce animated slides with color, thousands of fonts, and even sound. So the presentations began to be the focus and they got even fancier and fancier. It took a while to realize that they were also getting muddier and muddier, with the message being further subordinated to the exoticness of the presentation.

Today, and for the last 20 years, PowerPoint has been the ruler of slide-making software. You can now download complex templates to help you make any point you want in as fancy and complex a manner as you want. The question is, should you want?

I came to understand late in my career that PowerPoint hurts more than it helps in making an effective presentation that your audience will remember. No less an authority than Edward Tufte makes a damning case against the way PowerPoint is usually used. But it does not have to be used this way. PowerPoint can be effective if you follow a few rules.

- People remember what you say, not what you put on the slide. Say it clearly and enthusiastically.

- A slide should have no more information on it than your audience can comprehend in less than 5 seconds.

- Use your slides for concise bullet points or simple data graphs that illustrate what you are talking about.

- Use animations sparingly. An effective use of PowerPoint animations is to highlight the point you are currently talking about while you dim the other points.

posted at: 20:55 | path: /general | permanent link